The Uncomfortable Truth About AI Threat Detection: Why Your Security Stack is Actively Eroding M&A Deal Value

The cybersecurity industry is currently peddling a dangerous premise: the idea that purchasing a shiny new "AI-powered" threat detection platform will insulate your enterprise from risk. It is the modern equivalent of putting a deadbolt on a screen door.

Why 'AI-Powered' is the New Snake Oil

If you are a Private Equity partner managing portfolio risk, or a CISO trying to secure a highly dynamic enterprise, you are being sold a tired narrative. Vendors are promising that AI will act as a magic shield against external adversaries. The uncomfortably honest truth is that while you are writing seven-figure checks for defensive AI, your own employees are rapidly deploying autonomous "Agentic AI" workflows that your new security stack cannot even see, let alone govern.

We need to stop talking about AI as merely a defensive tool and start treating it as what it actually is: an autonomous, rapidly expanding threat vector that is actively eroding your enterprise value and destroying M&A deal multiples.

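The vendor numbers are genuinely attractive: organizations using AI-powered threat detection report a 65% reduction in time-to-detection and a 40% decrease in false positives compared to traditional security systems. But faster detection of external attacks does nothing to govern the agents your own teams are deploying.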

The Agitation: Cascading Failures and the Destruction of Portfolio Value

Let’s apply the psychological principle of Loss Aversion to your current security posture. Loss Aversion holds that the pain of losing capital is roughly twice as psychologically powerful as the joy of gaining it. Private Equity firms are entirely focused on the "Joy of Gain": scaling EBITDA and aggressively pursuing add-on acquisitions.

But here is the Cost of Inaction (COI) that is bleeding your portfolio dry: 72% of private equity firms across the US and Europe have reported a serious cyber incident at a portfolio company in the past three years, with an average cost of $3.4 million per incident.

The introduction of Agentic AI sharply amplifies this risk through "cascading failures". In a multi-agent system, an orchestration agent might hold the API keys for five other downstream operational systems. If that single orchestration agent is compromised via a prompt injection attack, the adversary inherits every credential it holds and, with them, access to every downstream system those keys unlock.
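The cascade can be made concrete by treating agents and systems as nodes in a credential graph, where an edge means "holds credentials for". A minimal sketch, assuming a hypothetical inventory of which agent holds keys to which system (all node names are invented for illustration): everything reachable from the compromised orchestration agent is its blast radius.

```javascript
// Hypothetical credential graph: each key "holds credentials for" the listed nodes.
// The orchestrator holds keys to five downstream systems, as in the scenario above.
const credentialGraph = {
  "orchestrator-agent": ["crm-api", "billing-api", "hr-api", "data-warehouse", "email-agent"],
  "email-agent": ["mail-gateway"],
  "billing-api": ["payment-processor"],
  "crm-api": [],
  "hr-api": [],
  "data-warehouse": [],
  "mail-gateway": [],
  "payment-processor": [],
};

// Breadth-first search: every node reachable from the compromised node is
// inside the blast radius, because the attacker inherits each credential in turn.
function blastRadius(graph, compromised) {
  const reached = new Set([compromised]);
  const queue = [compromised];
  while (queue.length > 0) {
    const node = queue.shift();
    for (const next of graph[node] ?? []) {
      if (!reached.has(next)) {
        reached.add(next);
        queue.push(next);
      }
    }
  }
  reached.delete(compromised); // report only the downstream systems
  return [...reached].sort();
}

console.log(blastRadius(credentialGraph, "orchestrator-agent"));
```

Compromising the orchestrator exposes seven systems, including two it never held keys for directly, while `blastRadius(credentialGraph, "email-agent")` returns only `["mail-gateway"]`. In practice the graph would be assembled from a secrets manager or IAM inventory rather than written by hand.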

This is not a hypothetical academic exercise. In a recent controlled red-team engagement, McKinsey’s internal AI platform was completely compromised by an autonomous agent that gained broad system access in under two hours. Agentic attacks traverse systems and exfiltrate data at machine speed, long before a human SOC analyst receives an alert.

Furthermore, "automation bias" (the human tendency to trust machine output over one's own judgment) is creating massive legal liabilities. Employees are using AI to fill out complex security questionnaires automatically. When the AI "hallucinates" an encryption standard you do not actually have, that answer can become a contractual representation your organization cannot honor. During M&A technical due diligence, these undiscovered vulnerabilities and false claims destroy post-closing value through expensive remediation, regulatory fines, and friction during system integration.
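One counter to automation bias is to refuse to submit any AI-drafted questionnaire answer that cannot be traced to a verified control. A minimal sketch, assuming a hypothetical inventory of attested controls and a simple answer format; the control IDs, questions and field names are invented for illustration.

```javascript
// Hypothetical inventory of controls the organization has actually verified.
const attestedControls = new Map([
  ["ENC-AT-REST", "AES-256 encryption for production databases"],
  ["MFA-ADMIN", "MFA enforced for all administrator accounts"],
]);

// Split AI-drafted answers into those backed by attested controls and those
// that must go to a human reviewer before anything is submitted.
function triageDraftAnswers(drafts) {
  const approved = [];
  const needsHumanReview = [];
  for (const draft of drafts) {
    // An answer citing no control at all cannot be verified, so it is not "backed".
    const backed =
      draft.claimedControls.length > 0 &&
      draft.claimedControls.every((id) => attestedControls.has(id));
    (backed ? approved : needsHumanReview).push(draft.question);
  }
  return { approved, needsHumanReview };
}

const drafts = [
  { question: "Is data encrypted at rest?", claimedControls: ["ENC-AT-REST"] },
  { question: "Do you use FIPS 140-3 validated HSMs?", claimedControls: ["HSM-FIPS"] },
  { question: "Do you run annual penetration tests?", claimedControls: [] },
];

console.log(triageDraftAnswers(drafts));
```

Here only the encryption-at-rest answer is approved; the HSM claim cites a control that does not exist in the inventory and is routed to a human, which is exactly the hallucination case described above.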

Example: AI Detection Algorithm

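    // Simplified AI threat scoring (illustrative sketch).
    // extractFeatures, model and applyRiskFactors stand in for a real feature
    // pipeline, a trained classifier and business-context weighting.
    const BLOCK_THRESHOLD = 0.8;

    function calculateThreatScore(event) {
      const features = extractFeatures(event);
      // Assumed to return { score, confidence } for the event.
      const prediction = model.predict(features);
      // Adjust the raw model score for context such as asset criticality.
      const riskScore = applyRiskFactors(prediction.score, event);

      return {
        score: riskScore,
        confidence: prediction.confidence,
        // Contain automatically above the threshold; otherwise keep watching.
        action: riskScore > BLOCK_THRESHOLD ? 'block' : 'monitor'
      };
    }

How AI is Transforming Security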

AI-powered security systems excel in several key areas:

- Behavioral Analysis: AI systems learn normal user and system behavior, instantly flagging deviations that could indicate compromise.

- Predictive Threat Intelligence: Machine learning models can draw on global threat data to anticipate likely attack vectors before they are exploited.

- Automated Response: AI can automatically contain threats, isolate affected systems, and initiate remediation protocols in milliseconds.
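The behavioral-analysis idea can be illustrated with the simplest possible baseline: learn the mean and standard deviation of a per-user metric, then flag observations far outside it. A minimal sketch using a z-score threshold, which is a deliberately basic stand-in for the learned models commercial platforms use; the metric (megabytes downloaded per day) and the threshold of 3 are assumptions.

```javascript
// Learn a per-user baseline (mean and population standard deviation)
// from historical observations of a single metric.
function buildBaseline(history) {
  const mean = history.reduce((sum, x) => sum + x, 0) / history.length;
  const variance = history.reduce((sum, x) => sum + (x - mean) ** 2, 0) / history.length;
  return { mean, std: Math.sqrt(variance) };
}

// Flag an observation whose z-score exceeds the threshold.
function isAnomalous(baseline, value, threshold = 3) {
  // Flat history: any change at all is a deviation.
  if (baseline.std === 0) return value !== baseline.mean;
  const z = Math.abs(value - baseline.mean) / baseline.std;
  return z > threshold;
}

// Hypothetical history: megabytes downloaded per day by one analyst.
const dailyMb = [120, 135, 110, 128, 140, 118, 125];
const baseline = buildBaseline(dailyMb);

console.log(isAnomalous(baseline, 130));  // false: within the normal range
console.log(isAnomalous(baseline, 2400)); // true: bulk download is flagged
```

A single global threshold is the main weakness of this approach: it ignores seasonality (month-end reporting, deal closings) that a production model would learn.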

The counter-intuitive insight that separates elite security leaders from the rest of the market is this: AI security is not about keeping the hackers out; it is about strictly governing what your autonomous systems are permitted to give away.

Your threat detection tools are looking outward. But the real threat is "Shadow AI"—the unvetted, unauthorized use of AI tools by your own deal teams, analysts, and developers. An analyst using a consumer-grade AI tool to summarize highly confidential Limited Partner (LP) communications or proprietary deal flow is bypassing your entire multimillion-dollar perimeter defense.
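Discovering Shadow AI usually starts with egress data you already collect. A minimal sketch, assuming proxy logs already parsed into `{ user, host }` records and a hand-maintained list of AI service hostnames; every hostname, user and the sanctioned list below is hypothetical, and a real inventory would be far longer and change constantly.

```javascript
// Hypothetical list of known AI service hostnames, and the subset the
// organization has vetted and approved.
const knownAiHosts = new Set([
  "api.llm-vendor.example",
  "chat.consumer-ai.example",
  "summarize.free-tool.example",
]);
const sanctionedAiHosts = new Set(["api.llm-vendor.example"]);

// Return, per user, the unsanctioned AI services they contacted.
function findShadowAi(proxyLog) {
  const findings = new Map();
  for (const { user, host } of proxyLog) {
    if (knownAiHosts.has(host) && !sanctionedAiHosts.has(host)) {
      if (!findings.has(user)) findings.set(user, new Set());
      findings.get(user).add(host);
    }
  }
  // Convert to plain objects with sorted arrays for reporting.
  return Object.fromEntries(
    [...findings].map(([user, hosts]) => [user, [...hosts].sort()])
  );
}

const proxyLog = [
  { user: "analyst-1", host: "chat.consumer-ai.example" },
  { user: "analyst-1", host: "intranet.example" },
  { user: "dev-2", host: "api.llm-vendor.example" },
  { user: "dev-2", host: "summarize.free-tool.example" },
  { user: "analyst-1", host: "chat.consumer-ai.example" },
];

console.log(findShadowAi(proxyLog));
```

Hostname matching only catches standalone tools; AI features embedded inside already-approved SaaS products will not show up this way and need to be covered by vendor review.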

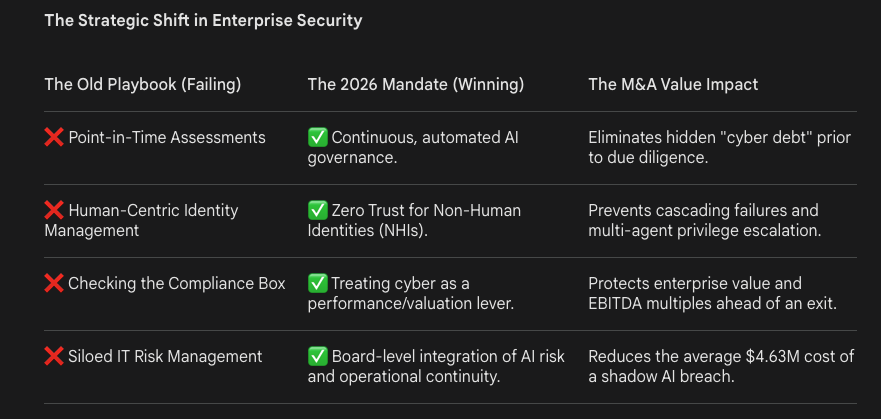

The old playbook of identifying threats and patching software is dead. The new mandate is continuous governance and establishing Zero Trust for Non-Human Identities (NHIs).

The Strategic Shift in Enterprise Security

"If your M&A due diligence relies on static, point-in-time security assessments, you aren't just acquiring a company—you are inheriting an autonomous, unmanaged threat vector that will dictate your next valuation."

The Solution: A Consultative Framework for CISOs and PE Partners

To stop the bleeding and protect your enterprise value, you must fundamentally restructure how you view AI deployment and threat detection. Move away from generic SaaS tools and implement a strict, continuous governance framework.

Here is your immediate operational checklist:

- Eradicate Shadow AI: Before you buy another external threat detection platform, audit your internal environment. Implement continuous monitoring to discover every unauthorized AI agent currently touching your proprietary data.

- Implement Zero Trust for Agents (NHIs): Do not give an AI agent standing privileges. Treat every autonomous agent as a hostile actor. Enforce strict, time-bound access controls and continuously monitor the "agentic loop" to ensure tools cannot independently escalate privileges.

- Restructure M&A Due Diligence: If you are acquiring a company, traditional financial diligence is no longer enough. You must conduct a deep-dive technical audit to uncover their hidden AI risk, hallucinated security liabilities, and technical debt before you sign the Letter of Intent.

- Assume Breach at the Agent Level: Architect your systems with the assumption that your AI will be manipulated. Build human-in-the-loop fail-safes for all high-stakes data transactions to counter automation bias.
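The Zero Trust and human-in-the-loop items above can be combined into a single authorization check that every agent tool call must pass. A minimal sketch, assuming a hypothetical in-process grant store: grants are scoped to named actions, expire after a TTL, cannot be issued by an agent to itself, and any action tagged high-stakes is held for human approval even when the grant is valid. The action names, TTLs and decision strings are illustrative.

```javascript
// Actions that always require a human decision, regardless of grants.
const HIGH_STAKES = new Set(["wire_transfer", "export_customer_data"]);

class AgentGrantStore {
  constructor(now = () => Date.now()) {
    this.now = now;          // injectable clock, so expiry can be tested
    this.grants = new Map(); // agentId -> Map(action -> expiresAtMs)
  }

  // No standing privileges: every grant is scoped to one action and expires.
  // An agent may not issue a grant to itself.
  grant(issuer, agentId, action, ttlMs) {
    if (issuer === agentId) throw new Error("agents cannot grant themselves privileges");
    if (!this.grants.has(agentId)) this.grants.set(agentId, new Map());
    this.grants.get(agentId).set(action, this.now() + ttlMs);
  }

  // Decide whether an agent's tool call may proceed.
  authorize(agentId, action) {
    const expiresAt = this.grants.get(agentId)?.get(action);
    if (expiresAt === undefined || this.now() >= expiresAt) return "deny";
    return HIGH_STAKES.has(action) ? "require_human_approval" : "allow";
  }
}

// Usage with a fake clock starting at t = 0 ms.
let t = 0;
const store = new AgentGrantStore(() => t);
store.grant("security-team", "reporting-agent", "read_crm", 15 * 60 * 1000);
store.grant("security-team", "reporting-agent", "export_customer_data", 15 * 60 * 1000);

console.log(store.authorize("reporting-agent", "read_crm"));             // allow
console.log(store.authorize("reporting-agent", "export_customer_data")); // require_human_approval
console.log(store.authorize("reporting-agent", "delete_records"));       // deny (never granted)
t = 16 * 60 * 1000;
console.log(store.authorize("reporting-agent", "read_crm"));             // deny (grant expired)
```

The self-grant check here only compares identifiers; a real deployment would authenticate the issuer and keep the grant store outside the agent's reach entirely.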

The cost of inaction is no longer a theoretical debate about future risk; it is a measurable deduction on your current term sheet. AI agents are already embedded in your business-critical processes, and security cannot be retrofitted after the fact. You can either govern your autonomous AI, or you can let it dictate your next valuation. Choose wisely.
