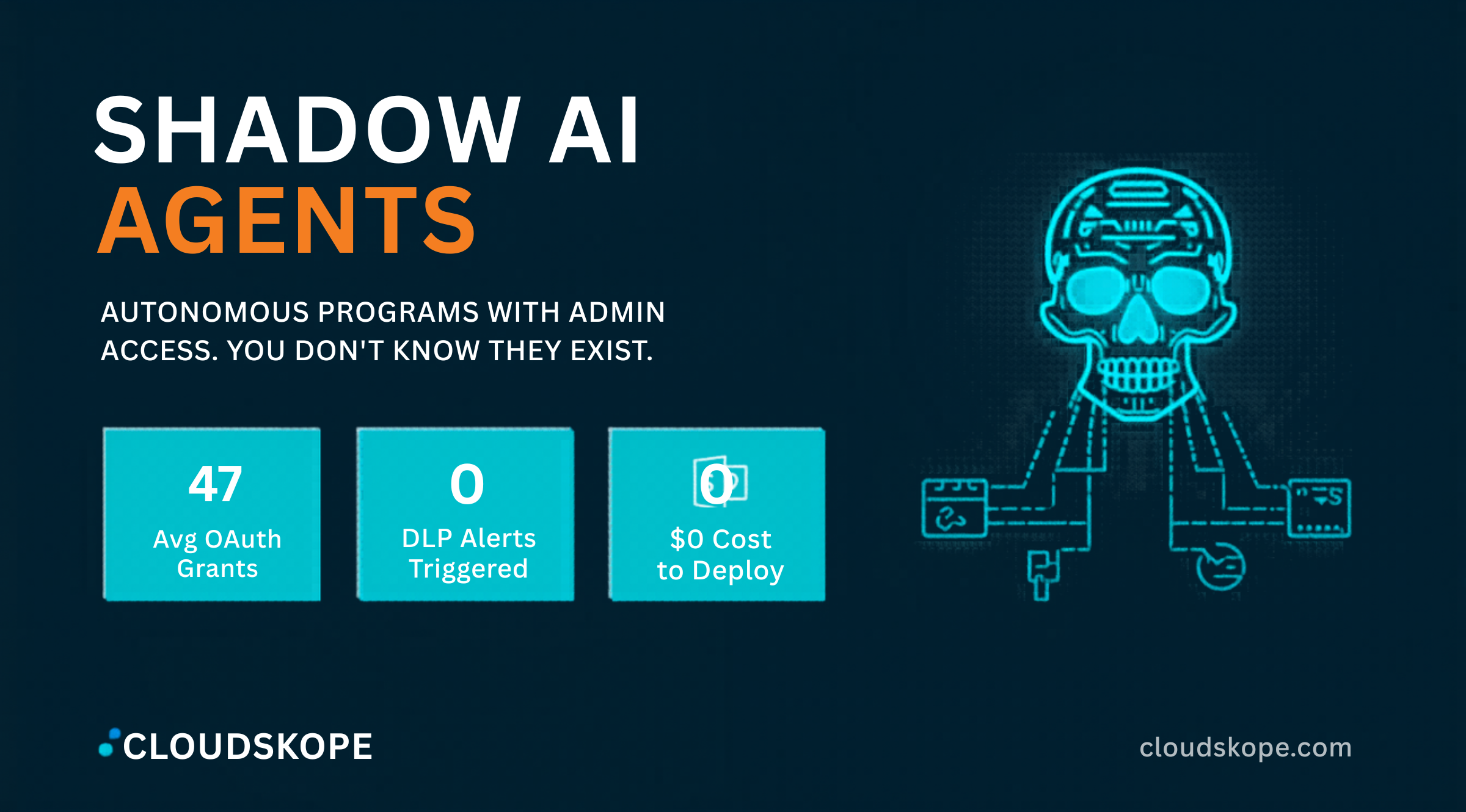

Your Employees Are Running Shadow AI Agents With Admin Access to Everything. You Don't Know About Any of Them.

Your security team spent Q1 of 2026 reviewing the AI tools your employees are using with corporate data. They identified the ChatGPT Enterprise usage, the Microsoft Copilot deployment, the GitHub Copilot licenses. They implemented an AI governance policy. They held a training session. Meanwhile, your revenue operations director connected an autonomous AI agent to your CRM, your marketing database, your email system, and your LinkedIn Sales Navigator account — and scheduled it to run every 15 minutes. The agent has OAuth tokens with delegated access to all four systems. It has been running for 11 months. Nobody on your security team knows it exists.

What Shadow AI Agents Are and Why They Are Structurally Different From Shadow IT

Shadow IT — employees using unauthorized software as an extension of their work — has existed since the early days of consumer SaaS. Security teams learned to manage it through cloud access security brokers, network monitoring, and acceptable use policies. Shadow AI agents are categorically different in ways that existing shadow IT controls do not address.

The Autonomy Dimension

Shadow IT applications are passive — they process data when a human interacts with them. Shadow AI agents are autonomous — they take action on a schedule, a trigger, or continuously, without any human in the loop at the moment of action. An AI agent connected to your CRM does not wait for an employee to interact with it. It queries the CRM database on its defined schedule. It reads customer records. It sends emails from the connected mailbox. It updates opportunity stages. It exports data based on its programmatic logic. All of this happens whether the employee who configured it is at their desk, on vacation, or has already left the company.

The Access Scope Problem

AI agents access enterprise systems through OAuth authorization grants, the same mechanism that allows apps like DocuSign or Zoom to integrate with Microsoft 365. When an employee authorizes an AI agent to access their email, they grant the agent a delegated OAuth token. The token's reach is bounded by the scopes the agent requested and the employee consented to, and within those scopes the agent can act on anything the employee's own account can reach. If the employee is an administrator and consents to broad scopes, the agent has administrative access. If the employee has access to sensitive financial data, the agent has access to sensitive financial data.

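Unlike a human login session, an OAuth grant to an AI agent does not expire on its own by default. Individual access tokens are short-lived, but the refresh token and the underlying grant typically persist until they are explicitly revoked or invalidated by identity provider policy. An employee who configured an AI agent and subsequently left the company may have left behind a grant that continues to provide the agent, and whatever third party operates it, with ongoing access to corporate systems, particularly in connected SaaS applications that the offboarding process never touches. The token is still valid. The agent may still be running. The former employee's access has been deprovisioned. The agent's has not.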
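One way to surface grants left behind by departed employees is to compare a grant export against the current staff roster. The sketch below is illustrative only: the `Grant` record and its field names (`app_name`, `authorized_by`, `scopes`) are hypothetical and do not match any vendor's export schema, so treat it as a starting point under those assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    # Hypothetical record shape; map your own export's columns onto it.
    app_name: str
    authorized_by: str
    scopes: tuple[str, ...]


def find_orphaned_grants(grants, active_users):
    """Return grants whose authorizing user is not in the active roster."""
    active = {user.lower() for user in active_users}
    return [g for g in grants if g.authorized_by.lower() not in active]


grants = [
    Grant("sales-agent", "alice@example.com", ("Mail.Send", "Contacts.Read")),
    Grant("calendar-sync", "bob@example.com", ("Calendars.Read",)),
]

# Alice has left the company; only Bob is still on the roster.
orphaned = find_orphaned_grants(grants, active_users=["bob@example.com"])
for g in orphaned:
    print(f"{g.app_name}: authorized by departed user {g.authorized_by}")
```

Matching on a case-insensitive email address is a simplification. A stable directory object ID is usually a safer join key when one is available.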

What These Agents Are Actually Doing

The AI agent ecosystem in 2026 has expanded dramatically beyond productivity tools. Agents are connecting to corporate systems for sales outreach automation (accessing CRM, email, LinkedIn), financial operations (connecting to ERP systems, accounting software, AP/AR workflows), HR operations (accessing employee records, payroll systems, applicant tracking), and customer success (reading customer communication history, sending communications from company addresses). These are not trivial integrations. These agents are operating as digital employees with access to some of the most sensitive systems in the enterprise, with no oversight beyond that of the individual employee who authorized them.

An AI agent authorized with an employee's OAuth credentials operates with that employee's access level, on a schedule you did not set, to destinations you did not audit, even after that employee leaves your company. It is not shadow IT. It is an unsupervised digital employee with admin-level access and no termination date.

The Security Threat Vectors

The security risk from shadow AI agents is not primarily that the agents themselves are malicious. The risk manifests through several mechanisms that existing security controls are not positioned to catch.

Data Exfiltration Without DLP Alerts

Data Loss Prevention systems are configured to detect unusual data transfer patterns — large bulk downloads, transfers to personal email accounts, uploads to unauthorized cloud storage. AI agents circumvent DLP controls because their data access patterns look identical to normal application API usage. An AI agent that connects to Salesforce and exports customer data on a nightly basis is indistinguishable from Salesforce's own reporting and analytics functions from a network traffic perspective. The API calls look correct. The authentication is valid. The access is authorized by the OAuth grant. DLP does not fire. The data leaves anyway.

Supply Chain Exposure Through Agent Platforms

Most AI agents are built on third-party platforms — n8n, Zapier, Make, custom implementations using OpenAI or Anthropic APIs, or purpose-built vertical AI agent tools. When an employee authorizes an AI agent built on a third-party platform, the OAuth token granting corporate system access is stored in that third-party platform's infrastructure. If the third-party platform experiences a breach, the attacker gains access to the OAuth tokens of every enterprise customer whose employees authorized agents on that platform.

The M&A Due Diligence Exposure

For private equity acquirers, shadow AI agent inventory has become a material pre-close diligence item, yet almost no diligence process currently includes it. When you acquire a company, you acquire all active OAuth grants to all systems. Some of those grants may authorize AI agents that are actively sending data to third-party platforms outside your new organization's security perimeter. Post-close discovery of unauthorized AI agents is an increasingly common finding in post-acquisition security assessments. The scope of access these agents had, and what they exported, cannot always be reconstructed from available logging.

Why Banning Does Not Work

Google's security research team made a point that most corporate AI policies have not absorbed: banning AI agents does not prevent their use. It prevents their use on corporate networks and devices. Users who are blocked from deploying AI agents through corporate channels deploy them through personal accounts, personal API keys, and browser-based tools that access corporate systems through legitimately authorized browser sessions. The agent still runs. Corporate system access still occurs. The IT team now has even less visibility. The appropriate response is to design a controlled path that is more attractive than the uncontrolled alternative.

What a Mature AI Agent Governance Program Looks Like

The organizations that will be ahead of this problem in 2027 are the ones building governance frameworks now, before the volume of shadow agents makes retroactive discovery prohibitively expensive.

A functional AI agent governance program starts with an OAuth grant inventory. Microsoft Entra ID and Google Workspace both provide administrative views of all OAuth application grants within the tenant. Running a full OAuth grant audit — identifying every application, every permission scope, every grant date, and every user who authorized it — is the first step in understanding the current exposure. In most mid-market environments, this audit surfaces dozens to hundreds of OAuth grants that IT and security teams have no record of reviewing.
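As a rough illustration of the triage step in such an audit, the sketch below flags grants that hold scopes on a watchlist. It makes no API calls. The sample records are shaped like Microsoft Graph `oauth2PermissionGrant` objects (`clientId`, `consentType`, `principalId`, `scope` as a space-separated string), but the data is invented, and the `HIGH_RISK_SCOPES` watchlist is an assumption to be tuned to your own risk model.

```python
# Illustrative watchlist (an assumption, not an official risk ranking).
HIGH_RISK_SCOPES = {
    "Mail.ReadWrite",
    "Mail.Send",
    "Files.ReadWrite.All",
    "Directory.ReadWrite.All",
}


def flag_risky_grants(grants):
    """Return (client_id, principal, risky_scopes) for grants holding watchlisted scopes."""
    findings = []
    for grant in grants:
        scopes = set(grant.get("scope", "").split())
        risky = sorted(scopes & HIGH_RISK_SCOPES)
        if not risky:
            continue
        # consentType "AllPrincipals" means consent was given for every user in the tenant.
        if grant.get("consentType") == "AllPrincipals":
            principal = "ALL USERS"
        else:
            principal = grant.get("principalId")
        findings.append((grant["clientId"], principal, risky))
    return findings


# Invented sample data for demonstration.
sample_grants = [
    {"clientId": "app-1", "consentType": "Principal", "principalId": "user-42",
     "scope": "Mail.Send Mail.ReadWrite offline_access"},
    {"clientId": "app-2", "consentType": "Principal", "principalId": "user-7",
     "scope": "User.Read"},
    {"clientId": "app-3", "consentType": "AllPrincipals", "principalId": None,
     "scope": "Directory.ReadWrite.All"},
]

findings = flag_risky_grants(sample_grants)
for client_id, principal, risky in findings:
    print(f"{client_id} -> {principal}: {', '.join(risky)}")
```

A real audit would also resolve each `clientId` to an application name and publisher, and record the grant date, before anyone decides what to revoke.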

The second component is an agent registry and approval process. Organizations need a lightweight process that allows employees to submit AI agent requests, receive an expedited security review of the platform and requested permissions, and get approval with appropriate controls (limited permission scope, defined session duration, logging requirements). The approval process needs to be fast enough that employees use it rather than circumventing it.
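A lightweight approval path could start as little more than a structured request and an automatic triage rule. The sketch below is one possible shape, not a prescribed design. The `AgentRequest` fields, the policy constants, and the platform name are all hypothetical placeholders. Low-risk requests are approved immediately with an expiry date, and everything else is routed to a human reviewer with the reasons attached.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class AgentRequest:
    owner: str
    platform: str
    scopes: set[str]
    duration_days: int  # how long the grant should live before re-approval


# Hypothetical policy values, for illustration only.
REVIEWED_PLATFORMS = {"example-automation-platform"}
SCOPES_NEEDING_MANUAL_REVIEW = {"Mail.Send", "Files.ReadWrite.All"}
MAX_DURATION_DAYS = 90


def triage(request, today):
    """Return (decision, reasons, expiry_date_or_None) for an agent request."""
    reasons = []
    if request.platform not in REVIEWED_PLATFORMS:
        reasons.append(f"platform {request.platform!r} has not been security reviewed")
    sensitive = sorted(request.scopes & SCOPES_NEEDING_MANUAL_REVIEW)
    if sensitive:
        reasons.append("sensitive scopes requested: " + ", ".join(sensitive))
    if request.duration_days > MAX_DURATION_DAYS:
        reasons.append("requested duration exceeds policy maximum")
    if reasons:
        return "manual_review", reasons, None
    return "auto_approved", [], today + timedelta(days=request.duration_days)


request = AgentRequest(
    owner="alice@example.com",
    platform="example-automation-platform",
    scopes={"Contacts.Read"},
    duration_days=30,
)
decision, reasons, expires = triage(request, today=date(2026, 1, 1))
print(decision, expires)  # auto_approved 2026-01-31
```

Auto-approving the low-risk cases is what keeps the sanctioned path faster than the workaround, which is the property the process depends on.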

The third component is offboarding integration. Every employee offboarding process should include an explicit step: inventory AI agents authorized by this employee, revoke OAuth grants associated with those agents, and determine whether any running agents should be reassigned to an authorized owner rather than terminated. This step is currently absent from virtually every offboarding checklist Cloudskope reviews.

The fourth component is ongoing monitoring for agent behavior anomalies. An agent that has historically exported 1,000 CRM records per day and suddenly exports 50,000 is detectable once a registry and behavioral baseline exist.
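The volume check described above can be approximated with a simple per-agent baseline. The sketch below is a minimal example, assuming daily export counts are already being collected per agent. The function names and the default thresholds (`min_ratio`, `z`) are illustrative choices, not recommended values.

```python
from statistics import mean, pstdev


def export_threshold(history, min_ratio=5.0, z=3.0):
    """Compute the alert threshold from an agent's past daily export counts.

    Uses the larger of a multiple of the mean and a mean-plus-deviation bound,
    so a very stable history does not trigger alerts on small fluctuations.
    """
    if not history:
        raise ValueError("need at least one day of history to form a baseline")
    baseline = mean(history)
    spread = pstdev(history)
    return max(baseline * min_ratio, baseline + z * spread)


def is_anomalous(history, today_count, **kwargs):
    """Return True if today's export count exceeds the agent's threshold."""
    return today_count > export_threshold(history, **kwargs)


history = [980, 1010, 1000, 1020, 990]  # roughly 1,000 records per day
print(is_anomalous(history, 50_000))  # True
print(is_anomalous(history, 1_050))   # False
```

A fixed multiple of the mean is crude, and seasonal workloads such as quarter-end reporting would need a more careful baseline. The point is that the check only becomes possible once each agent is registered and its normal volume is recorded.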

Shadow AI agents are the shadow IT problem of 2026 — except that they have broader access, act autonomously, generate no traditional security signals, and persist indefinitely through OAuth grants that your offboarding process does not address. The organizations that recognize this as a governance problem and build accordingly will be the ones that can explain, after their next M&A deal, exactly what AI agents were running and what they had access to.

Cloudskope's Identity and Access Risk Assessment includes a full OAuth grant inventory and AI agent exposure analysis across Microsoft 365, Google Workspace, and connected SaaS platforms. For PE sponsors, our M&A Cyber Due Diligence program specifically inventories AI agent grants and autonomous process access as part of pre-close technical review — giving you visibility into the digital employees you are about to inherit.
